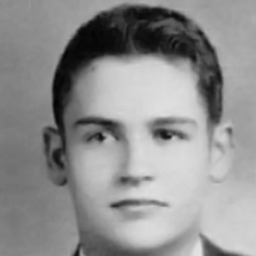

Women's most average style in the 1930s

The Big Data of Big Hair

If a picture is worth 1,000 words, one could argue that the hairstyle in your yearbook photo is at least 990 of them. Hair is decade-defining — it’s hard to think of the 1930s without finger waves, the 1960s without beehives, the 1970s without afros, the 1990s without mullets, or the 2000s without frosted tips.

And, if you think of the 1980s, well, you probably think of hair teased to the heavens. After all, “the higher the hair, the closer to God.” But were the 1980s really when big hair reached its height? That’s where data comes in.

Using deep learning and neural network classifiers (more on this in the methodology), we looked at a dataset of more than 30,000 high school yearbook photos from 1930–2013 to see what should really be crowned the “Big Hair Era.”

Watch the video above and explore median hair size in the chart below to see what we found.

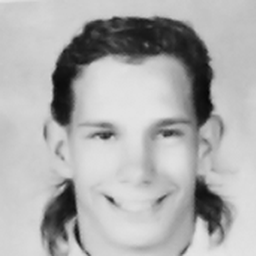

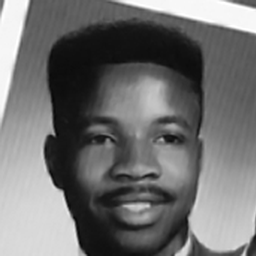

Men's most average style in the 1930s

Methods

Dataset

This story uses a public dataset of 37,921 American high school yearbook photos from the years 1905-2013, created and published by Shiry Ginosar, Kate Rakelly, Sarah Sachs, Brian Yin, and Alexei A. Efros. All faces are front-facing and aligned by eye position. Complete details about the creation of this dataset can be found in an article by the authors.

Image processing pipeline

To analyze hair in the yearbook dataset, we created a processing pipeline with three stages:

- Hair segmentation. Identify the pixels in a portrait that correspond to hair.

- Feature detection. Summarize important features of the hairstyle.

- Feature analysis. Investigate how hairstyles change over time using targeted analyses of the hairstyle features we’ve created.

Hair segmentation

Identifying the hair in a portrait is an example of semantic segmentation, a challenging problem in computer science. In our case, the computer’s task is to accurately identify whether each pixel in an image is a hair pixel or not. We leveraged an existing approach from deep learning, called a U-Net, which has shown promising results in biomedical image segmentation (e.g., identifying a tumor or lesion in a scan).

We adopted a popular U-Net architecture for this story (code here). To train the U-Net to segment hair, we required a labeled dataset containing images similar to the yearbook images. The closest existing dataset is Figaro1K, which consists of 1,050 labeled images containing hair of many textures, colors, and styles. However, this dataset does not contain historical images, and it differs considerably from yearbook photos in image composition and possibly in other features such as contrast, dynamic range, and sharpness.

We therefore expanded the training dataset by hand-segmenting over 400 pictures from a custom dataset of historical yearbook images from archive.org. (The segmentation model was trained before the author discovered the well-curated Ginosar et al. yearbook dataset used for this story. Kids, always try Googling a few different ways before making your own dataset!) An augmented training set of Figaro1K images and the hand-labeled yearbook photos was used to train the U-Net initially. After training, test images that the U-Net had labeled successfully were manually selected and added to the training set for another pass. This ultimately yielded a final model trained on 3,065 images with segmentation masks.

The trained model was then used to generate hair maps for all images in the Ginosar et al. yearbook dataset.

[Figure: example images and hair maps]

Three “failure modes” proved especially challenging for the classifier: overexposed highlights, low contrast between ear and hair, and low contrast between hair and background.

At this point, it was clear that the U-Net especially struggled to segment hair in yearbook photos from the 1920s and earlier, owing to the lighting and composition choices common in those images. We therefore restricted the next stages of our analysis to images from 1930 onward. This left 37,026 images, or roughly 4,000 images per decade (on average).

Feature detection

To analyze changes in hairstyles, we needed a way to summarize hair features appropriately. Hair segmentation gave us a “hair map” for each image in the dataset, expressing the probability that each pixel contains hair. Next, we used a deep learning approach called a Variational Autoencoder (VAE) to summarize each hair map in four coordinates. A VAE is a neural network that learns to express features that vary maximally over the entire dataset, such as hair length (which varies from Morticia-Addams-long to military-buzz-cut-short) and height (from buzz to beehive). Because the VAE is trained on hair maps and not on the original yearbook photos, the results are less influenced by unrelated features such as skin color, facial features, or image grain. A downside of this approach is that hair maps lose textural and color detail, so the VAE is largely insensitive to trends in hair color or subtle texture changes. The script to train the VAE is hosted on GitHub.

Feature analysis

The complete set of scripts for the analyses conducted here is hosted on GitHub, along with the raw hair maps and the set of four features for each.

Representative looks for each decade: To estimate representative looks for each decade, we calculated the centroid of the cluster of images from that decade in 4-dimensional feature space. We selected the 30 female-tagged and 30 male-tagged images closest to the centroid by Euclidean distance. From this subset, we manually curated images for display in the article based on image quality and to ensure a diverse representation of ethnicity.
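The centroid-and-distance step described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the code used for the story: the image ids, the toy feature values, and the function names `centroid` and `closest_to_centroid` are all hypothetical, and the real analysis would run this once per decade and per gender tag with `k=30`.

```python
import math

def centroid(points):
    """Component-wise mean of a list of equal-length feature vectors."""
    return [sum(column) / len(points) for column in zip(*points)]

def closest_to_centroid(features, k=30):
    """Return the k image ids nearest the centroid, closest first.

    `features` maps a (hypothetical) image id to its 4-D feature vector.
    """
    center = centroid(list(features.values()))
    ranked = sorted(features, key=lambda image_id: math.dist(features[image_id], center))
    return ranked[:k]

# Toy data for one decade: three similar looks and one outlier.
decade_features = {
    "img_a": [0.0, 0.0, 0.0, 0.0],
    "img_b": [1.0, 0.0, 0.0, 0.0],
    "img_c": [0.0, 2.0, 0.0, 0.0],
    "img_d": [9.0, 9.0, 9.0, 9.0],
}
representative = closest_to_centroid(decade_features, k=2)
```

Note that a single outlier such as `img_d` pulls the centroid toward itself, which is one reason a manual curation pass over the shortlisted images is still useful.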

Mullet, beehive and straight hair analysis: For each style, we trained a binary classifier to identify the target style (e.g., mullet or no mullet) on a labeled training set of yearbook photos. Note that yearbook photos, and not hair maps, are used so the classifier can leverage textural information. Each classifier has an identical architecture (see code for the mullet classifier here): features of each image in the training set are calculated using a hidden layer of the pretrained VGG-16 convolutional neural network. A single fully connected feed-forward layer is trained to take those features as input and yield a correct classification.

After training on a small subset of labeled images from the dataset, the classifiers were used to identify target hairstyles in the rest of the dataset. Manual inspection was used to confirm the machine’s selections (note that category boundaries can be challenging and at times subjective: the difference between a beehive and a bouffant is sometimes narrow). Once instances of each target look were confirmed, the proportion of photos showing each look was calculated for each year.
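The final tally is simple arithmetic: for each year, divide the number of confirmed photos showing the look by the total number of photos from that year. A minimal sketch, assuming the confirmed labels are available as `(year, has_look)` pairs (a hypothetical layout, as is the function name):

```python
from collections import Counter

def look_proportion_by_year(records):
    """Return {year: share of that year's photos showing the target look}.

    `records` is an iterable of (year, has_look) pairs, where has_look is the
    manually confirmed classifier decision for one photo.
    """
    totals = Counter()
    hits = Counter()
    for year, has_look in records:
        totals[year] += 1
        if has_look:
            hits[year] += 1
    return {year: hits[year] / totals[year] for year in sorted(totals)}

# Toy example: one mullet in four photos from 1985, two in two from 1986.
records = [
    (1985, True), (1985, False), (1985, False), (1985, False),
    (1986, True), (1986, True),
]
proportions = look_proportion_by_year(records)
```

Dividing by the yearly total matters because the number of photos per year is not constant across the dataset, so raw counts alone would not be comparable.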

Analyzing looks by gender expression: Every image in the yearbook dataset is tagged as “male” or “female” using a combination of automated labeling and human review. It is essential to note that both the accuracy of the labeling and the aptness of a binary tag are imperfect: we know this is a simplification that does not entirely represent the complexity of gender identity. Because we cannot be sure what gender every high schooler in the dataset would have identified as, we can only approximate the gender most likely expressed in an image. As such, we can only make coarse estimations about how gender identity and hair are intertwined from this dataset.

One estimation we do attempt to make is how much hairstyles associated with male- vs. female-presenting portraits have diverged and overlapped over time. To do this, we use the 4-dimensional coordinates assigned to each hair map as features for distinguishing looks belonging to each class (male- and female-tagged images). For every year from 1930 to 2013, a random forest classifier was trained on the images from that year with 5-fold cross-validation. The results of these classifiers are plotted over time with LOESS smoothing.
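To show what 5-fold cross-validation on one year's images involves, here is a dependency-free sketch. It is not the analysis code: it swaps the random forest for a much simpler nearest-centroid classifier so it runs with the standard library alone, it assumes that held-out accuracy is the quantity being plotted (the text does not name the metric), and all function names and toy data are hypothetical.

```python
import math
import random

def mean_vector(points):
    """Component-wise mean of a list of feature vectors."""
    return [sum(column) / len(points) for column in zip(*points)]

def nearest_centroid_predict(train, point):
    """Label `point` with the class whose training centroid is closest.

    Stand-in for the random forest used in the article.
    """
    centroids = {
        label: mean_vector([x for x, y in train if y == label])
        for label in {y for _, y in train}
    }
    return min(centroids, key=lambda label: math.dist(centroids[label], point))

def cross_validated_accuracy(samples, k=5, seed=0):
    """Mean held-out accuracy over k folds of (features, label) samples."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    scores = []
    for i, test_fold in enumerate(folds):
        # Train on every fold except the one held out for testing.
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        correct = sum(nearest_centroid_predict(train, x) == y for x, y in test_fold)
        scores.append(correct / len(test_fold))
    return sum(scores) / k

# Toy "year" in which the two tagged groups have clearly different 4-D features.
separable_year = (
    [([1.0 + 0.01 * i, 1.0, 0.0, 0.0], "female") for i in range(10)]
    + [([-1.0 - 0.01 * i, -1.0, 0.0, 0.0], "male") for i in range(10)]
)
accuracy = cross_validated_accuracy(separable_year)
```

Under this reading, a year in which the classifier scores near 50% is one where male- and female-tagged hairstyles are hard to tell apart, and a year with high accuracy is one where they have diverged.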

Hair size over time: For each image in the dataset, we estimate the density of hair by summing the probability of hair at each pixel of its hair map (as estimated by the U-Net) and normalizing by the number of pixels per image (256 × 256). For each year in the dataset, we calculated the median hair density over all hair maps. Our plot of hair size shows a LOESS-smoothed curve fitted to the median hair density from 1930 to 2013.
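The density calculation amounts to taking the mean hair probability over a hair map, then the median of those means within each year. A minimal sketch, assuming each hair map is available as rows of per-pixel probabilities; it uses tiny 2 × 2 toy maps instead of 256 × 256 ones, hypothetical function names, and leaves out the LOESS smoothing step:

```python
from collections import defaultdict
from statistics import median

def hair_density(hair_map):
    """Sum of per-pixel hair probabilities divided by the pixel count."""
    n_pixels = sum(len(row) for row in hair_map)
    return sum(sum(row) for row in hair_map) / n_pixels

def median_density_by_year(hair_maps):
    """`hair_maps` is an iterable of (year, hair_map) pairs."""
    by_year = defaultdict(list)
    for year, hair_map in hair_maps:
        by_year[year].append(hair_density(hair_map))
    return {year: median(values) for year, values in sorted(by_year.items())}

# Toy hair maps: each inner list is one row of pixel probabilities.
toy_maps = [
    (1980, [[1.0, 0.0], [0.0, 0.0]]),  # density 0.25
    (1980, [[1.0, 1.0], [0.0, 0.0]]),  # density 0.5
    (1980, [[1.0, 1.0], [1.0, 1.0]]),  # density 1.0
    (1981, [[0.0, 0.0], [0.0, 0.0]]),  # density 0.0
]
yearly_median = median_density_by_year(toy_maps)
```

Using the median rather than the mean keeps a handful of extreme hair maps, or segmentation failures that flood the image with "hair", from dominating a year's value.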